Instagram allows teen users as young as 13 to find potentially deadly drugs for sale in just two clicks, according to a Tech Transparency Project (TTP) investigation that adds to mounting questions about the dangers the platform poses to children.

TTP created multiple Instagram accounts for minors between the ages of 13 and 17 and used them to test teen access to controlled substances on the platform. Not only did Instagram allow the hypothetical teens to easily search for age-restricted and illegal drugs, but the platform’s algorithms helped the underage accounts connect directly with drug dealers selling everything from opioids to party drugs.

The findings highlight another aspect of Instagram’s failures when it comes to children. The platform, now part of Facebook parent company Meta, has faced increasing criticism and scrutiny over its impact on the mental health of young people following revelations from Facebook whistleblower Frances Haugen. As first reported in The Wall Street Journal, Facebook knew for years that Instagram was toxic for many teens, including girls experiencing body image issues, based on its own internal company research.

Now, with Instagram’s chief executive Adam Mosseri set to testify before a Senate panel this week about the platform’s harms to children, TTP’s new research is highlighting another way Instagram can endanger kids—by providing a pipeline to illicit drugs.

TTP’s investigation found that:

- When a hypothetical teen user logged into the Instagram app, it only took two clicks to reach an account selling drugs like Xanax. In contrast, it took more than double the number of clicks—five—for the teen to log out of Instagram.

- Instagram bans some drug-related hashtags like #mdma (for the party drug ecstasy), but if the teen user searched for #mdma, Instagram auto-filled alternative hashtags for the same drug into the search bar.

- When a teen account followed a drug dealer on Instagram, the platform started recommending other accounts selling drugs, highlighting how the company’s algorithms try to keep young people engaged regardless of dangerous content.

- Drug dealers operate openly on Instagram, offering people of any age a variety of pills, including the opioid Oxycontin. Many of these dealers mention drugs directly in their account names to advertise their services.

- Despite Instagram’s pledge to make new under-16 accounts private by default, TTP found that was only true of accounts created through the mobile app; under-16 accounts created through the Instagram website were public by default.

Researchers and lawmakers have been raising the alarm over drug dealing on social media for years. In an April 2018 speech, then-Food and Drug Administration Commissioner Scott Gottlieb warned that tech companies weren’t doing enough to root out illegal opioid sales, calling out Instagram, Facebook, and other platforms by name.

In response to a September 2018 Washington Post report about Instagram’s burgeoning role as a drug marketplace, a Facebook vice president at the time, Carolyn Everson, said the company wasn’t yet “sophisticated enough” to distinguish between posts selling illegal drugs and “taking Xanax cause they are stressed out.” She added: “Obviously, there is some stuff that gets through that is totally against our policy, and we’re getting better at it.”

But as TTP found in its new research, Instagram is still allowing drug dealers to do business on the platform, in violation of its Community Guidelines, which prohibit “buying or selling non-medical or pharmaceutical drugs.” What’s more, Instagram allows teens, one of its most vulnerable user populations, to jump into this marketplace with no guardrails.

Two clicks

TTP’s investigation involved setting up seven teen accounts: one for a 13-year-old, two for 14-year-olds, two for 15-year-olds, and two for 17-year-olds. The accounts contained no profile photos or information and posted none of their own content. Some of the accounts used the names of fictional characters from popular television shows, like Lisa Simpson from “The Simpsons,” and Michael Scarn, an alter ego of the character Michael Scott on “The Office.”

In all cases, despite the fact that these were minor accounts, Instagram did nothing to prevent them from searching for drug-related content—and the platform’s automatic features even sped up the process.

For example, when one of our teen users started typing the phrase “buyxanax” into Instagram’s search bar, the platform started auto-filling results for buying Xanax before the user was even finished typing. When the minor clicked on one of the suggested accounts, they instantly got a direct line to a Xanax dealer. The entire process took seconds and involved just two clicks. The pattern repeated with “buyfentanyl.” (Pills laced with fentanyl are a rising factor in drug overdose deaths for teens and adults in many parts of the country.)

Instagram’s efficiency in directing teen users to drugs contrasts with the more complicated process of logging off the platform. From the same starting point of the Xanax-buying teen, if a user wanted to log out, they had to first click their profile icon on the bottom right, then click the hamburger menu on the upper right, then click settings and scroll to the bottom, then click “log out,” and click “log out” again.

Two clicks to find Xanax

The five clicks required to log off—in contrast with the two clicks to find drugs for sale—suggest something about Instagram’s priorities: It selectively deploys friction to keep users engaged on the platform, but not to stop teen users from finding dangerous content like drugs.

Instagram allows accounts such as alcohol brands to restrict their content to people over a certain age, but doing so is entirely voluntary. Drug dealers don’t tend to ask for identification, and none of the drug-related content encountered in TTP’s investigation had any age restrictions.

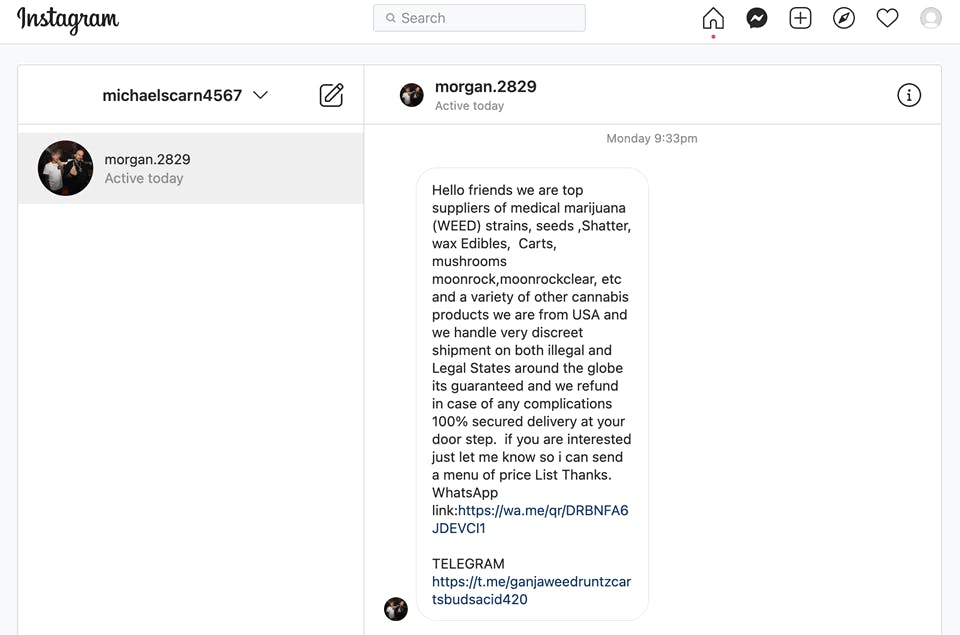

At times, drug dealers actively reached out to TTP’s teen users. The same minor user who followed a Xanax-selling account recommended by Instagram received a series of unsolicited phone calls from the dealer looking to make a sale. In another case, after a minor user followed an Instagram account selling drugs, the dealer sent a direct message with a menu of products, prices, and shipping options without waiting for the buyer to initiate a conversation.

Hashtag workarounds

Instagram has banned some drug-related hashtags, but amplifies other hashtags for the same drugs, making it easy for teens to find them.

For example, Instagram removed the hashtag #mdma, for the party drug MDMA or ecstasy. But when one of our teen accounts typed #mdma in the Instagram search bar, the platform auto-filled alternative hashtags for the drug, including #mollymdma, which incorporates “molly,” a slang term for MDMA.

Instagram is not only missing these easy-to-identify alternative drug hashtags, but is actually suggesting them to users, undermining its own enforcement efforts.

Instagram’s algorithms can also point children to different kinds of drugs.

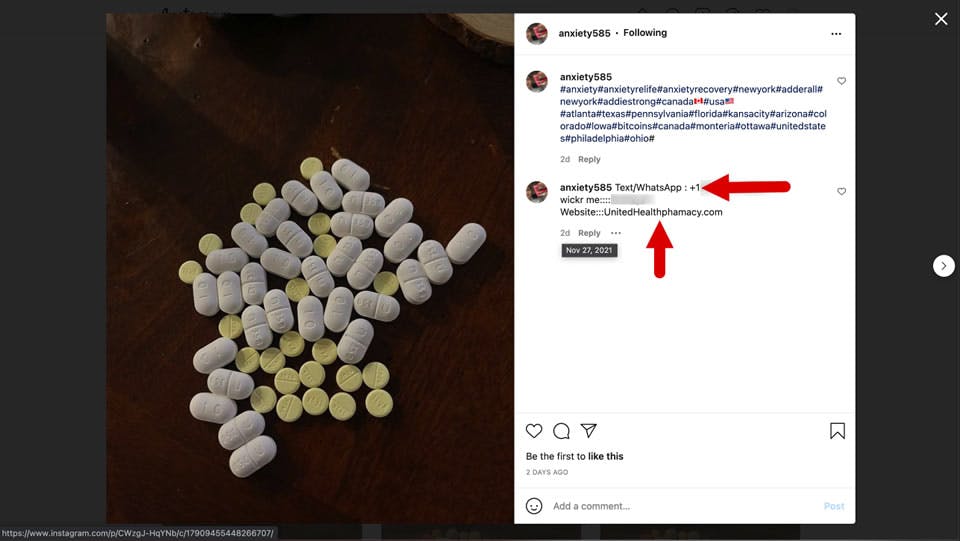

Using an account for a 15-year-old, TTP began by searching for how to buy Xanax, and followed one of the accounts suggested by Instagram. Instagram then suggested more accounts to follow, including one selling Adderall, the prescription stimulant. Following that account led to more suggestions, including one for another Adderall account. It’s easy to see how a teen could be led down a rabbit hole of prescription drugs on the platform.

During the course of TTP’s investigation, our researchers submitted 50 posts to Instagram that appeared to violate the company’s policies against selling drugs. After review, Instagram responded that 72% of the flagged posts (36) did not violate its Community Guidelines, despite clear signs of drug dealing activity.

In the end, Instagram removed 12 of the posts and said it took down an entire account that had violated company policy. However, when TTP checked later, the account in question was still active (along with its violating post). Instagram’s review of one other post flagged by TTP was still pending.

Among the content that Instagram determined did not violate its policies: the Xanax-selling account that made multiple unsolicited calls to our hypothetical teen user.

‘Pharmacy’ networks

TTP also found a number of Instagram accounts that point users to questionable “pharmacy” websites.

One collection of Instagram accounts hawking various types of pills directs users to a website called unitedhealthpharmacy.com, which sells drugs including the opioid oxycodone, stimulants, and ecstasy. Recent data released by the Centers for Disease Control and Prevention found that drug overdose deaths in the U.S. topped 100,000 during the 12 months ended April 2021, a record high driven by opioids such as fentanyl.

Domain information shows that unitedhealthpharmacy.com was created in May 2021. The only contact information on the website is a WhatsApp number. (WhatsApp is also part of Meta, the parent company of Facebook and Instagram.)

Another network of drug-related Instagram accounts—with hashtags like #oxycotton (a riff on Oxycontin) and #perc (short for Percocet)—pointed users to a website called unitedonlinepharma.com, created on Oct. 31, 2021. The site includes a “trending deals” section offering everything from ecstasy pills to vials of the club drug ketamine.

These “pharmacy”-promoting networks appear to violate Meta’s prohibition against so-called coordinated inauthentic behavior. The company’s policy on inauthentic behavior states that its platforms “do not allow people to misrepresent themselves,” use fake accounts, or “engage in behaviors designed to enable other violations under our Community Standards.” Instagram announced new measures to combat such activity in August 2020.

Meta said it “took action” on 2.7 million pieces of drug content on Facebook and Instagram in the third quarter of 2021, with the company itself identifying 96.7% of that content. But those numbers don’t account for drug content that may have been missed by Facebook’s artificial intelligence systems or gone unreported by users—a potentially vast number.

TTP did not purchase any drugs for sale on Instagram, and it’s possible that some of the drug accounts are scams. But media reports indicate that teens regularly buy pills on social media, often with deadly results. An August 2020 report from ABC News Australia described drug dealers making heavy use of Instagram, with one calling it a “top place to sell.”

Privacy loophole

TTP’s drug investigation turned up another issue: Instagram’s failure to fully protect the privacy of teens.

On July 27, 2021, Instagram announced that new accounts created by users under 16 would be set to private by default. The policy change was prompted by company research, which found that young people “appreciate a more private experience,” according to Instagram. But TTP found there’s a major loophole.

As part of its new research, TTP created two 15-year-old Instagram accounts, one through the Instagram app and one through the Instagram website. While the account created through the app was indeed set to private by default, the account created via the website was set to public by default—the opposite of what the company promised. See how it works in the video below.

Meanwhile, the 15-year-old account created through the app—which was set to private by default—wasn’t entirely private, TTP found. A closer look at the account’s settings revealed that it was set to allow tagging from anyone by default, meaning that any person could tag the 15-year-old in photos.

These default settings run contrary to Instagram’s July announcement, which claimed that the company’s new policies would make it “harder for potentially suspicious accounts to find young people” and “stop young people from hearing from adults they don’t know, or that they don’t want to hear from.”

PR rinse-repeat

As noted above, Instagram’s problems with drug dealing have persisted for years.

In May 2020, a whistleblower complaint to the Securities and Exchange Commission made the case that Facebook and Instagram were aware of illegal opioid sales on their platforms and had misled shareholders about the extent of the activity. A few months later, Sens. Joe Manchin (D-W.Va.) and John Cornyn (R-Texas) introduced the See Something, Say Something Online Act, seeking to hold tech companies accountable for facilitating the sale of illegal and controlled substances.

Despite the mounting concern, however, Instagram has used nearly identical boilerplate language in responding to reported drug violations, restating its written policies and putting the onus on users to flag abuses—without addressing the company’s own responsibility for policing its platform.

You can see this pattern in the interactive graphic below: